Hybrid AI Approach Slashes Energy Use 100x While Boosting Accuracy

Researchers combine neural networks with symbolic reasoning to create a dramatically more efficient framework that mirrors human problem-solving.

Researchers have unveiled a far more efficient approach to artificial intelligence that could cut energy consumption by as much as a factor of 100 while also improving accuracy. The method combines traditional neural networks with human-like symbolic reasoning, creating a hybrid system that mirrors how people approach problems: breaking them into steps and categories rather than relying on brute-force computation.

How It Works

Conventional deep learning models process information through massive neural networks that require enormous amounts of compute power. The new approach introduces a symbolic reasoning layer that works alongside the neural network, handling tasks like logical deduction, category classification, and step-by-step problem decomposition.

By offloading structured reasoning to the symbolic component, the neural network can focus on what it does best — pattern recognition and generalization — without having to learn logical rules from scratch through billions of training examples. The result is a system that requires dramatically less compute to achieve comparable or superior results.
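The division of labor described above can be illustrated with a toy sketch. This is not the researchers' implementation, which the article does not detail. It assumes a stand-in "neural" function that scores categories from raw features and a small hand-written rule base applied by forward chaining. All names here (`neural_perception`, `RULES`, `forward_chain`, `hybrid_infer`) and the feature keys are hypothetical.

```python
# Toy neuro-symbolic pipeline: a perception step proposes categories,
# and a symbolic step derives further facts from explicit rules.

def neural_perception(features: dict[str, float]) -> dict[str, float]:
    """Hypothetical stand-in for a trained network.

    A real system would run a learned model here; this stub simply
    passes two feature values through as category confidences.
    """
    return {
        "bird": features.get("wing_like", 0.0),
        "mammal": features.get("fur_like", 0.0),
    }

# Symbolic layer: (premises, conclusion) rules written by hand,
# so the network never has to learn them from training examples.
RULES = [
    ({"bird"}, "vertebrate"),
    ({"mammal"}, "vertebrate"),
    ({"vertebrate"}, "has_spine"),
]

def forward_chain(facts: set[str]) -> set[str]:
    """Apply RULES repeatedly until no new fact can be derived."""
    derived = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in RULES:
            if premises <= derived and conclusion not in derived:
                derived.add(conclusion)
                changed = True
    return derived

def hybrid_infer(features: dict[str, float], threshold: float = 0.5) -> set[str]:
    """Keep categories the perception step is confident in, then reason over them."""
    scores = neural_perception(features)
    facts = {label for label, score in scores.items() if score >= threshold}
    return forward_chain(facts)

if __name__ == "__main__":
    result = hybrid_infer({"wing_like": 0.9, "fur_like": 0.1})
    print(sorted(result))  # ['bird', 'has_spine', 'vertebrate']
```

In this sketch the multi-step deduction (bird, then vertebrate, then has_spine) costs a few set operations rather than additional network capacity, which is the intuition behind offloading structured reasoning to a symbolic component.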

Why Energy Matters Now

The timing of this research is significant. AI energy consumption has become one of the industry's most pressing concerns. Data centers powering AI workloads are consuming ever-larger shares of global electricity, and the chip manufacturing behind them is power-hungry as well, with TSMC alone using 7 to 10 percent of Taiwan's total power supply.

The Iran war has further complicated the picture by driving up energy costs across Asia, where much of the world's AI chip manufacturing and data center capacity is concentrated. South Korean industrial power prices have risen 39 to 55 percent year-to-date, adding urgency to the search for more efficient AI approaches.

Part of a Broader Efficiency Push

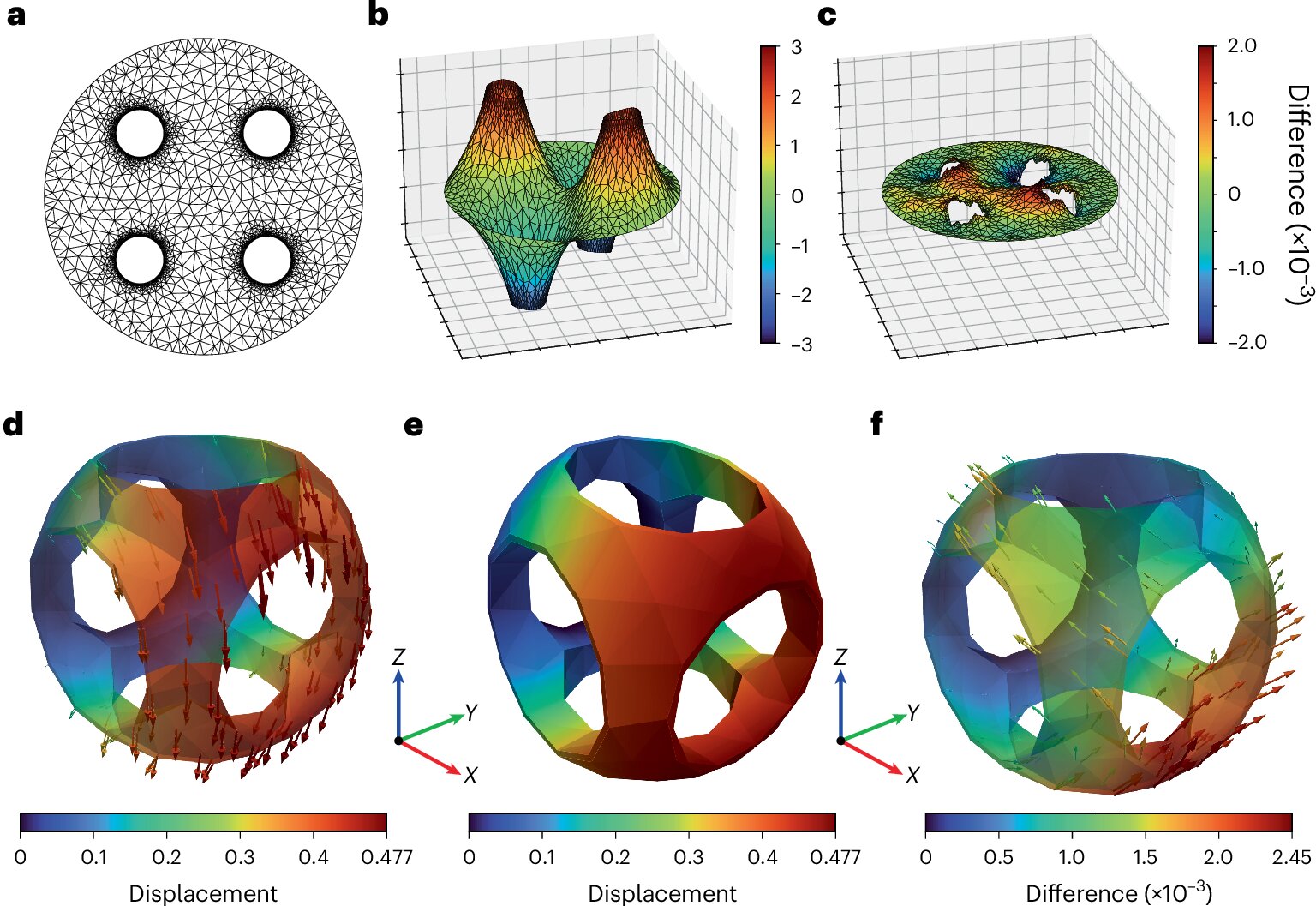

This research joins a growing body of work focused on making AI more computationally efficient. Google's TurboQuant algorithm, presented at ICLR 2026, demonstrated a six-fold reduction in memory overhead for large language model inference. Neuromorphic computing breakthroughs at Sandia National Labs have shown that brain-inspired hardware can solve physics simulations with dramatically lower energy costs than traditional supercomputers.

The trend suggests that the AI industry is beginning to compete on efficiency as aggressively as it competes on capability — a shift driven by both economic pressure and growing scrutiny of AI's environmental footprint.

Implications

If the hybrid approach holds up at scale, it could fundamentally change the economics of AI deployment. Smaller organizations that cannot afford massive compute budgets could run sophisticated AI systems. Edge devices could handle workloads currently reserved for data centers. And the environmental cost of AI, a growing concern among regulators and the public, could be significantly reduced.

The research is still in its early stages, and scaling the approach to frontier-model complexity remains an open challenge. But the direction is clear: the future of AI may not be about building bigger models, but about building smarter ones.
