Google's TurboQuant Algorithm Tackles AI's Memory Wall at ICLR 2026

A novel two-step compression algorithm using PolarQuant vector rotation and quantized Johnson-Lindenstrauss projection dramatically reduces KV cache overhead, potentially shifting AI development toward efficiency-first paradigms.

Google unveiled TurboQuant at the International Conference on Learning Representations (ICLR) 2026, presenting an algorithm that substantially reduces the memory overhead from key-value (KV) caches in transformer models — one of the most significant practical bottlenecks in deploying large language models at scale.

The Technical Approach

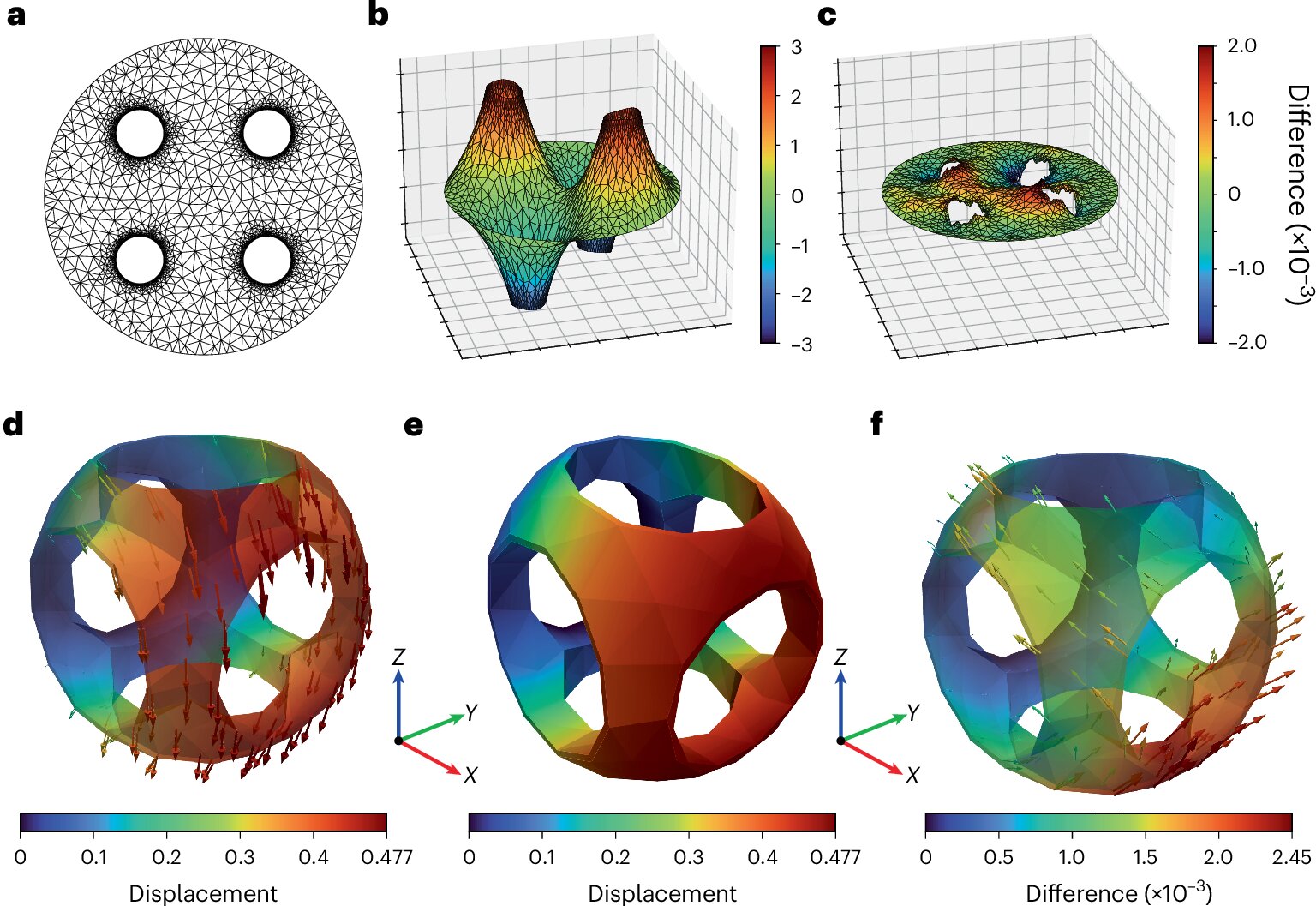

TurboQuant employs a two-step compression strategy. The first step, PolarQuant, applies a vector rotation to the cached key and value tensors. The rotation reshapes the distribution of values so that they tolerate aggressive quantization: each value can be stored in fewer bits while largely preserving the information that matters for attention computation.
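To make the intuition concrete, here is a minimal sketch of why rotating a vector before quantizing it can help. It is not Google's PolarQuant. As a stand-in it uses a randomized Hadamard rotation followed by plain uniform 4-bit quantization, and every name in it (`hadamard_rotate`, `quantize_uniform`, `compare`) is hypothetical. The test vector has one large outlier, which forces a coarse quantization grid unless the rotation first spreads that energy across all coordinates.

```python
import math
import random


def hadamard_rotate(x, signs):
    """Orthonormal randomized Hadamard rotation: (1/sqrt(n)) * H * diag(signs) * x."""
    n = len(x)  # n must be a power of two
    y = [s * v for s, v in zip(signs, x)]
    h = 1
    while h < n:  # in-place fast Walsh-Hadamard butterflies
        for i in range(0, n, 2 * h):
            for j in range(i, i + h):
                a, b = y[j], y[j + h]
                y[j], y[j + h] = a + b, a - b
        h *= 2
    scale = 1.0 / math.sqrt(n)
    return [v * scale for v in y]


def hadamard_unrotate(y, signs):
    """Invert hadamard_rotate: H/sqrt(n) is its own inverse, then undo the sign flips."""
    z = hadamard_rotate(y, [1.0] * len(y))
    return [s * v for s, v in zip(signs, z)]


def quantize_uniform(x, bits):
    """Symmetric uniform quantization with one scale set by the largest magnitude."""
    levels = 2 ** (bits - 1) - 1
    max_abs = max(abs(v) for v in x) or 1.0
    step = max_abs / levels
    return [round(v / step) * step for v in x]


def mse(a, b):
    """Mean squared error between two equal-length vectors."""
    return sum((p - q) ** 2 for p, q in zip(a, b)) / len(a)


def compare(n=64, bits=4, outlier=20.0, seed=0):
    """Return (direct_error, rotated_error) for a vector with one large outlier."""
    rng = random.Random(seed)
    x = [rng.gauss(0.0, 1.0) for _ in range(n)]
    x[0] = outlier  # assumption: a single outlier channel, chosen for illustration
    signs = [rng.choice((-1.0, 1.0)) for _ in range(n)]
    direct = quantize_uniform(x, bits)
    rotated = quantize_uniform(hadamard_rotate(x, signs), bits)
    restored = hadamard_unrotate(rotated, signs)
    return mse(x, direct), mse(x, restored)


if __name__ == "__main__":
    direct_err, rotated_err = compare()
    print(f"direct 4-bit MSE:  {direct_err:.4f}")
    print(f"rotated 4-bit MSE: {rotated_err:.4f}")
```

Because the rotation is orthonormal, it can be undone exactly after dequantization. The only loss comes from the quantization grid, which is finer once the outlier no longer dictates the scale.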

The second step applies a compression technique based on the Johnson-Lindenstrauss (JL) lemma, a mathematical result guaranteeing that a finite set of high-dimensional points can be projected into a lower-dimensional space while approximately preserving pairwise distances. Google's quantized variant of this projection further reduces the memory footprint of the KV cache beyond what rotation and quantization alone can achieve.
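The general idea of a quantized JL projection can be sketched as follows. This is an illustration under stated assumptions, not the algorithm Google presented. A key vector is projected with a random Gaussian matrix and only the sign of each projected coordinate is kept, along with the key's norm. The query stays unquantized, and the attention inner product is estimated from the sign bits. The function names, dimensions, and seeds are all hypothetical.

```python
import math
import random


def dot(a, b):
    return sum(p * q for p, q in zip(a, b))


def normalize(v):
    norm = math.sqrt(dot(v, v))
    return [x / norm for x in v]


def make_projection(m, d, seed=0):
    """Random Gaussian JL matrix with m rows and d columns."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(d)] for _ in range(m)]


def compress_key(S, k):
    """Keep one sign bit per projected coordinate, plus the key's norm."""
    bits = [1 if dot(row, k) >= 0 else -1 for row in S]
    return bits, math.sqrt(dot(k, k))


def estimate_inner_product(S, q, bits, k_norm):
    """Estimate <q, k> from the sign bits.

    For a Gaussian row s, E[sign(s.k) * (s.q)] = sqrt(2/pi) * <q, k> / ||k||,
    so rescaling the average by sqrt(pi/2) * ||k|| gives an unbiased estimate.
    """
    m = len(S)
    total = sum(b * dot(row, q) for b, row in zip(bits, S))
    return math.sqrt(math.pi / 2.0) * k_norm * total / m


def demo(d=128, m=512, seed=1):
    """Return (true, estimated) inner product for a correlated query/key pair."""
    rng = random.Random(seed)
    k = [rng.gauss(0.0, 1.0) for _ in range(d)]
    q = [kv + 0.5 * rng.gauss(0.0, 1.0) for kv in k]
    k, q = normalize(k), normalize(q)
    S = make_projection(m, d, seed=seed + 1)
    bits, k_norm = compress_key(S, k)
    return dot(q, k), estimate_inner_product(S, q, bits, k_norm)


if __name__ == "__main__":
    true_ip, est_ip = demo()
    print(f"true <q,k>: {true_ip:.3f}  estimated: {est_ip:.3f}")
```

With these illustrative sizes, the compressed key occupies 512 sign bits plus one norm, compared with 128 × 16 = 2,048 bits for the same key in 16-bit floating point. The estimate is noisy but unbiased, and the noise shrinks as the number of projections `m` grows.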

The combined effect is a dramatic reduction in the memory consumed by KV caches, which in long-context scenarios can exceed the memory required for the model weights themselves.

Why This Matters

The KV cache is the mechanism by which transformer models remember what came before in a conversation or document. It stores the key and value vectors of every token already processed so that they need not be recomputed, which means the cache grows with every new token. As context windows have expanded from thousands to millions of tokens, the cache has become a dominant memory consumer during inference.

This memory pressure creates a direct constraint on how many users can be served simultaneously on a given set of GPUs. Reducing KV cache size by a significant factor means either serving more concurrent users on the same hardware or achieving the same throughput with fewer, less expensive GPUs.
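A back-of-the-envelope calculation shows how cache precision translates into serving capacity. The sketch below uses the standard sizing formula for a KV cache (keys plus values, for every layer, KV head, and token). The model shape, context length, and 40 GiB memory budget are hypothetical, and the 4-bit figure ignores any per-block metadata such as scales that a real quantization scheme would store.

```python
GIB = 1024 ** 3


def kv_cache_bytes(layers, kv_heads, head_dim, seq_len, bits_per_value, batch=1):
    """Bytes needed to cache keys and values for every layer, head, and token."""
    values = 2 * layers * kv_heads * head_dim * seq_len * batch  # 2 = keys + values
    return values * bits_per_value // 8


def max_concurrent_sequences(memory_budget_bytes, per_sequence_bytes):
    """How many full-length sequences fit in the memory left over for the cache."""
    return memory_budget_bytes // per_sequence_bytes


# Hypothetical model shape and context length, chosen only for illustration.
shape = dict(layers=32, kv_heads=8, head_dim=128, seq_len=131072)

fp16_bytes = kv_cache_bytes(bits_per_value=16, **shape)
int4_bytes = kv_cache_bytes(bits_per_value=4, **shape)
budget = 40 * GIB  # assumed memory available for the cache after loading weights

if __name__ == "__main__":
    print(f"16-bit cache per sequence: {fp16_bytes / GIB:.0f} GiB")
    print(f" 4-bit cache per sequence: {int4_bytes / GIB:.0f} GiB")
    print("sequences at 16-bit:", max_concurrent_sequences(budget, fp16_bytes))
    print("sequences at  4-bit:", max_concurrent_sequences(budget, int4_bytes))
```

Under these assumptions, a single 131,072-token sequence needs 16 GiB of cache at 16 bits per value and 4 GiB at 4 bits, so the same 40 GiB budget holds ten sequences instead of two.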

The Shift From Scaling to Efficiency

TurboQuant arrives at a moment when the AI industry is increasingly questioning whether raw parameter scaling, that is, simply making models bigger, remains the most productive path forward. Training a frontier model now costs hundreds of millions of dollars per run, serving it adds further expense, and the power consumption of AI data centers has become a policy concern.

Algorithmic innovations like TurboQuant suggest that substantial performance-per-dollar gains are available through efficiency improvements rather than scale increases. If the same quality of output can be achieved with dramatically less memory and compute, the economic case for pursuing ever-larger models weakens.

Competitive Context

Google is not alone in pursuing KV cache compression. Meta, Microsoft, and several academic groups have published related work on quantized attention mechanisms and sparse KV cache schemes. But TurboQuant's two-step approach — combining geometric rotation with dimensionality reduction — appears to achieve a particularly favorable tradeoff between compression ratio and output quality.

The ICLR presentation also positions Google's research team prominently in the efficiency discourse at a time when OpenAI, Anthropic, and others continue to emphasize scale as the primary driver of capability gains. Whether the industry collectively shifts toward efficiency-first development or continues scaling may depend on whether results like TurboQuant can be replicated across a broader range of model architectures and tasks.
