NVIDIA Publishes Physical AI Data Factory Blueprint on GitHub With Major Robotics Partners

NVIDIA's open-source blueprint for massive-scale physical AI data processing launches with partners including FieldAI, Hexagon Robotics, Skild AI, Uber, and Teradyne, alongside a new 700-hour healthcare robotics dataset.

NVIDIA has released its Physical AI Data Factory Blueprint on GitHub, an open-source framework for massive-scale data processing designed to accelerate the training of robots, autonomous vehicles, and other embodied AI systems. The April release arrives with an expanding partner ecosystem and a new healthcare-focused dataset that signals physical AI's push into medical domains.

The Blueprint

The Data Factory Blueprint provides a modular pipeline for generating, processing, and validating the enormous datasets required to train physical AI systems. The framework supports synthetic data generation — creating photorealistic simulated environments where robots can train on millions of scenarios without real-world risk — as well as reinforcement learning workflows that allow systems to optimize their behavior through trial and error at scale.

The architecture is built on NVIDIA's Cosmos world foundation models and integrates with the company's Omniverse simulation platform. Engineers can specify environmental parameters, object types, physics properties, and edge cases, then generate vast datasets of training scenarios on demand.

Partner Ecosystem

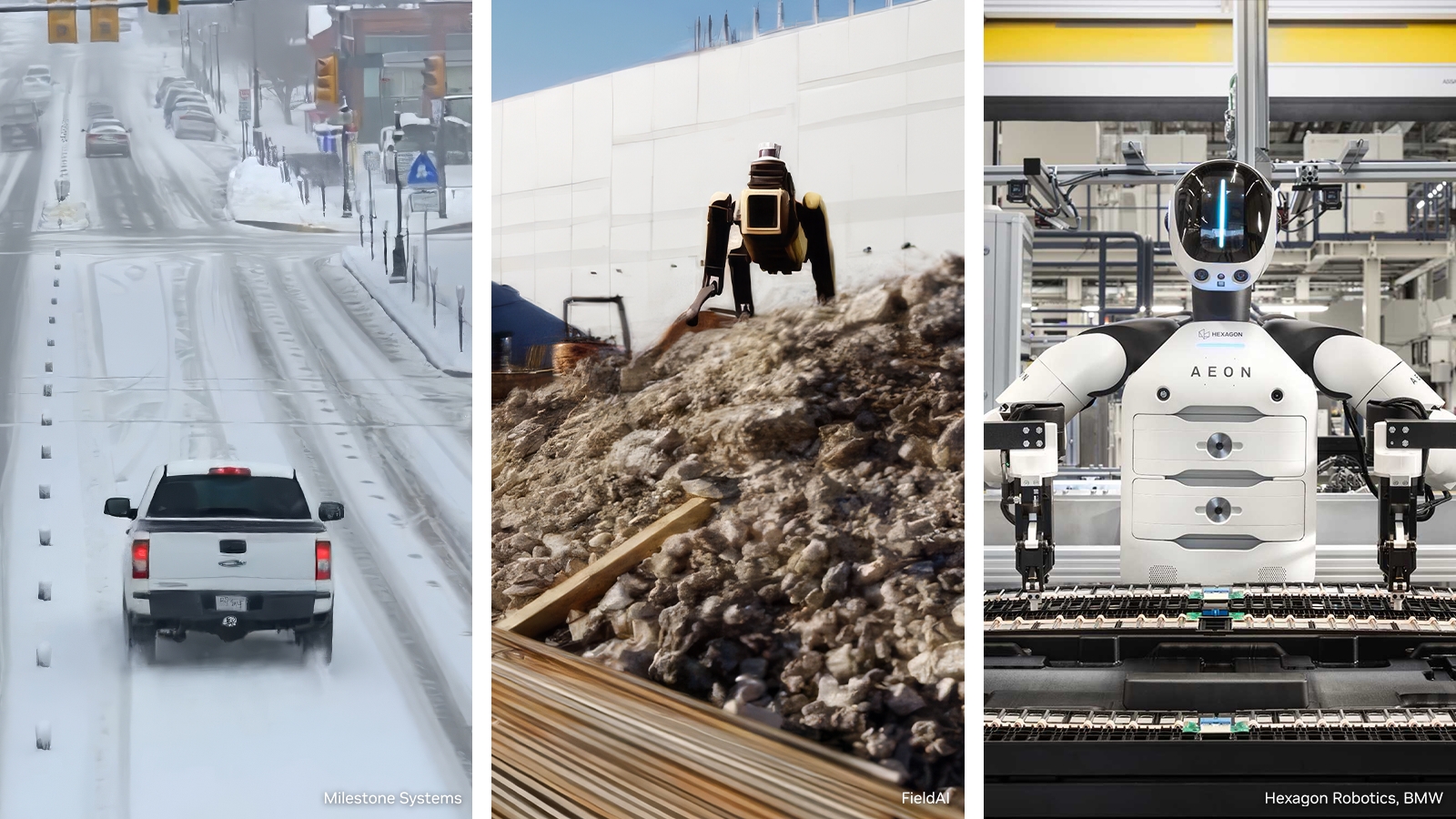

Several major companies have adopted or co-developed portions of the blueprint:

- FieldAI is using the framework for autonomous navigation in unstructured outdoor environments

- Hexagon Robotics has integrated the pipeline into its industrial automation training workflows

- Skild AI is leveraging synthetic data generation to train general-purpose robot foundation models

- Uber continues to expand its use of the blueprint for autonomous vehicle perception

- Teradyne Robotics, parent of Universal Robots and MiR, applies the framework to improve manipulation reliability

This breadth of adoption suggests the blueprint is achieving NVIDIA's goal of becoming the default infrastructure for physical AI development across industries.

Open-H Healthcare Robotics Dataset

Alongside the blueprint, NVIDIA launched Open-H, a healthcare robotics dataset containing more than 700 hours of surgical video and clinical procedure recordings. The dataset is designed to train AI systems that assist in surgical environments — from instrument tracking and tissue identification to autonomous suturing and procedural guidance.

Open-H represents one of the largest publicly available datasets for healthcare robotics, addressing a critical data scarcity problem in medical AI. Collecting surgical training data has historically been constrained by privacy regulations, institutional gatekeeping, and the sheer difficulty of instrumenting operating rooms. By releasing a curated, de-identified dataset at scale, NVIDIA is attempting to lower the barrier to entry for research teams working on surgical AI.

Strategic Significance

The open-source release follows NVIDIA's established playbook: give away the software and development tools to increase demand for the GPU hardware that runs them. Every lab and startup that adopts the Data Factory Blueprint becomes a potential customer for NVIDIA's training and inference hardware.

But the significance extends beyond commercial strategy. Physical AI has been constrained by data scarcity in a way that language AI has not — the real world does not generate neatly labeled training data the way the internet generates text. Synthetic data generation at scale may be the key to unlocking the same rapid capability gains in robotics that transformer models achieved in language.

Newsletter

Get Lanceum in your inbox

Weekly insights on AI and technology in Asia.